EP1205908B1 - Pronunciation of new input words for speech processing - Google Patents

Pronunciation of new input words for speech processing

- Publication number

- EP1205908B1 (application EP01309137A)

- Authority

- EP

- European Patent Office

- Prior art keywords

- sub

- word

- sequence

- word units

- units

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Expired - Lifetime

Classifications

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS OR SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING; SPEECH OR AUDIO CODING OR DECODING

- G10L15/00—Speech recognition

- G10L15/06—Creation of reference templates; Training of speech recognition systems, e.g. adaptation to the characteristics of the speaker's voice

-

- G—PHYSICS

- G10—MUSICAL INSTRUMENTS; ACOUSTICS

- G10L—SPEECH ANALYSIS OR SYNTHESIS; SPEECH RECOGNITION; SPEECH OR VOICE PROCESSING; SPEECH OR AUDIO CODING OR DECODING

- G10L15/00—Speech recognition

- G10L15/06—Creation of reference templates; Training of speech recognition systems, e.g. adaptation to the characteristics of the speaker's voice

- G10L15/063—Training

- G10L2015/0631—Creating reference templates; Clustering

Definitions

- the present invention relates to the determination of phoneme or phoneme like models for words or commands which can be added to a word/command dictionary and used in speech processing related applications, such as speech recognition.

- the invention particularly relates to the generation of canonical and non-canonical phoneme sequences which represent the pronunciation of input words, which sequences can be used in speech processing applications.

- speech recognition systems are becoming more and more popular due to the increased processing power available to perform the recognition operation.

- Most speech recognition systems can be classified into small vocabulary systems and large vocabulary systems.

- the speech recognition engine usually compares the input speech to be recognised with acoustic patterns representative of the words known to the system.

- the reference patterns usually represent phonemes of a given language. In this way, the input speech is compared with the phoneme patterns to generate a sequence of phonemes representative of the input speech.

- a word decoder is then used to identify words within the sequence of phonemes using a word to phoneme dictionary.

- a problem with large vocabulary speech recognition systems is that if the user speaks a word which is not in the word dictionary, then a mis-recognition will occur and the speech recognition system will output the word or words which sound most similar to the out of vocabulary word actually spoken.

- This problem can be overcome by providing a mechanism which allows users to add new word models for out of vocabulary words. To date, this has predominantly been achieved by generating acoustic patterns representative of the out of vocabulary words. However, this requires the speech recognition system to match the input speech with two different types of model - phoneme models and word models, which slows down the recognition process. Other systems allow the user to add a phonetic spelling to the word dictionary in order to cater for out of vocabulary words.

- GB-A-2349260 describes a system for generating new reference models for adding to a speech recognition dictionary from three or more training signals.

- the system simultaneously compares and aligns the three or more training signals with each other and, from the alignment results, generates a reference model representative of the training signals.

- One aim of the present invention is to provide an alternative technique for generating a phoneme or phoneme-like sequence representative of new words to be added to a word dictionary or command dictionary which may be used in, for example, a speech recognition system.

- Embodiments of the present invention can be implemented using dedicated hardware circuits, but the embodiment that is to be described is implemented in computer software or code, which is run in conjunction with a personal computer.

- the software may be run in conjunction with a workstation, photocopier, facsimile machine, personal digital assistant (PDA), web browser or the like.

- Figure 1 shows a personal computer (PC) 1 which is programmed to operate an embodiment of the present invention.

- a keyboard 3, a pointing device 5, a microphone 7 and a telephone line 9 are connected to the PC 1 via an interface 11.

- the keyboard 3 and pointing device 5 enable the system to be controlled by a user.

- the microphone 7 converts the acoustic speech signal of the user into an equivalent electrical signal and supplies this to the PC 1 for processing.

- An internal modem and speech receiving circuit may be connected to the telephone line 9 so that the PC 1 can communicate with, for example, a remote computer or with a remote user.

- the program instructions which make the PC 1 operate in accordance with the present invention may be supplied for use with the PC 1 on, for example, a storage device such as a magnetic disc 13 or by downloading the software from a remote computer over, for example, the Internet via the internal modem and telephone line 9.

- this sequence of phonemes is input, via a switch 20, to the word decoder 21 which identifies words within the generated phoneme sequence by comparing the phoneme sequence with those stored in a word to phoneme dictionary 23.

- the words 25 output by the word decoder 21 are then used by the PC 1 to either control the software applications running on the PC 1 or for insertion as text in a word processing program running on the PC 1.

- In order to be able to add words to the word to phoneme dictionary 23, the speech recognition system 14 also has a training mode of operation. This is activated by the user applying an appropriate command through the user interface 27 using the keyboard 3 or the pointing device 5. This request to enter the training mode is passed to the control unit 29 which causes the switch 20 to connect the output of the speech recognition engine 17 to the input of a word model generation unit 31. At the same time, the control unit 29 outputs a prompt to the user, via the user interface 27, to provide several renditions of the word to be added. Each of these renditions is processed by the pre-processor 15 and the speech recognition engine 17 to generate a plurality of sequences of phonemes, each representative of a respective rendition of the new word.

- These sequences of phonemes are input to the word model generation unit 31, which processes them to identify the most probable phoneme sequence which could have been mis-recognised as all the training examples. This sequence is stored, together with a typed version of the word input by the user, in the word to phoneme dictionary 23.

- After the user has finished adding words to the dictionary 23, the control unit 29 returns the speech recognition system 14 to its normal mode of operation by connecting the output of the speech recognition engine 17 back to the word decoder 21 through the switch 20.

- Figure 3 shows in more detail the components of the word model generation unit 31 discussed above.

- the word model generation unit 31 includes a memory 41 which receives each phoneme sequence output from the speech recognition engine 17 for each of the renditions of the new word input by the user.

- the phoneme sequences stored in the memory 41 are applied to the dynamic programming alignment unit 43 which, in this embodiment, uses a dynamic programming alignment technique to compare the phoneme sequences and to determine the best alignment between them.

- the alignment unit 43 performs the comparison and alignment of all the phoneme sequences at the same time.

- the identified alignment between the input sequences is then input to a phoneme sequence determination unit 45, which uses this alignment to determine the sequence of phonemes which matches best with the input phoneme sequences.

- each phoneme sequence representative of a rendition of the new word can have insertions and deletions relative to this unknown sequence of phonemes which matches best with all the input sequences of phonemes.

- Figure 4 shows a possible matching between a first phoneme sequence (labelled $d^1_i, d^1_{i+1}, d^1_{i+2}, \ldots$) representative of a first rendition of the new word, a second phoneme sequence (labelled $d^2_j, d^2_{j+1}, d^2_{j+2}, \ldots$) representative of a second rendition of the new word and a sequence of phonemes (labelled $p_n, p_{n+1}, p_{n+2}, \ldots$) which represents a canonical sequence of phonemes of the text which best matches the two input sequences.

- the dynamic programming alignment unit 43 must allow for the insertion of phonemes in both the first and second phoneme sequences (represented by the inserted phonemes $d^1_{i+3}$ and $d^2_{j+1}$) as well as the deletion of phonemes from the first and second phoneme sequences (represented by phonemes $d^1_{i+1}$ and $d^2_{j+2}$, which are both aligned with two phonemes in the canonical sequence of phonemes), relative to the canonical sequence of phonemes.

- dynamic programming is a technique which can be used to find the optimum alignment between sequences of features, which in this embodiment are phonemes.

- the dynamic programming alignment unit 43 calculates the optimum alignment by simultaneously propagating a plurality of dynamic programming paths, each of which represents a possible alignment between a sequence of phonemes from the first sequence (representing the first rendition) and a sequence of phonemes from the second sequence (representing the second rendition). All paths begin at a start null node which is at the beginning of the two input sequences of phonemes and propagate until they reach an end null node, which is at the end of the two sequences of phonemes.

- Figures 5 and 6 schematically illustrate the alignment which is performed and this path propagation.

- Figure 5 shows a rectangular coordinate plot with the horizontal axis being provided for the first phoneme sequence representative of the first rendition and the vertical axis being provided for the second phoneme sequence representative of the second rendition.

- the start null node $\phi_s$ is provided at the top left hand corner and the end null node $\phi_e$ is provided at the bottom right hand corner.

- the phonemes of the first sequence are provided along the horizontal axis and the phonemes of the second sequence are provided down the vertical axis.

- Figure 6 also shows a number of lattice points, each of which represents a possible alignment (or decoding) between a phoneme of the first phoneme sequence and a phoneme of the second phoneme sequence.

- lattice point 21 represents a possible alignment between first sequence phoneme $d^1_3$ and second sequence phoneme $d^2_1$.

- Figure 6 also shows three dynamic programming paths $m_1$, $m_2$ and $m_3$ which represent three possible alignments between the first and second phoneme sequences and which begin at the start null node $\phi_s$ and propagate through the lattice points to the end null node $\phi_e$.

- the dynamic programming alignment unit 43 keeps a score for each of the dynamic programming paths which it propagates, which score is dependent upon the overall similarity of the phonemes which are aligned along the path. Additionally, in order to limit the number of deletions and insertions of phonemes in the sequences being aligned, the dynamic programming process places certain constraints on the way in which each dynamic programming path can propagate.

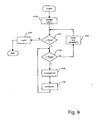

- Figure 7 shows the dynamic programming constraints which are used in this embodiment.

- If a dynamic programming path ends at lattice point (i,j), representing an alignment between phoneme $d^1_i$ of the first phoneme sequence and phoneme $d^2_j$ of the second phoneme sequence, then that dynamic programming path can propagate to the lattice points (i+1,j), (i+2,j), (i+3,j), (i,j+1), (i+1,j+1), (i+2,j+1), (i,j+2), (i+1,j+2) and (i,j+3).
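
The constraint above can be sketched in a few lines of Python. This is a minimal illustration only: the function name `allowed_successors` is hypothetical, the value `mxhops=4` is taken from the later statement that mxi is set equal to i+4, and the clipping to the sequence lengths is deliberately left out.

```python
def allowed_successors(i, j, mxhops=4):
    """List the lattice points a path ending at (i, j) may propagate to.

    Assumption: mxhops is 4, so at most three hops in total are allowed.
    Bounds on the sequence lengths are ignored in this sketch.
    """
    points = []
    for i2 in range(i, i + mxhops):
        for j2 in range(j, j + mxhops):
            if (i2, j2) == (i, j):
                continue  # the path may not stay at the same lattice point
            if i2 + j2 < i + j + mxhops:  # keep only the triangular region
                points.append((i2, j2))
    return points


print(allowed_successors(0, 0))
```

Called with (0, 0), the function returns nine points, which correspond one for one to the nine lattice points listed above with i = j = 0.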

- the dynamic programming alignment unit 43 keeps a score for each of the dynamic programming paths, which score is dependent upon the similarity of the phonemes which are aligned along the path. Therefore, when propagating a path ending at point (i,j) to these other points, the dynamic programming process adds the respective "cost" of doing so to the cumulative score for the path ending at point (i,j), which is stored in a store (SCORE(i,j)) associated with that point.

- this cost includes insertion probabilities for any inserted phonemes, deletion probabilities for any deletions and decoding probabilities for a new alignment between a phoneme from the first phoneme sequence and a phoneme from the second phoneme sequence.

- In particular, when there is an insertion, the cumulative score is multiplied by the probability of inserting the given phoneme; when there is a deletion, the cumulative score is multiplied by the probability of deleting the phoneme; and when there is a decoding, the cumulative score is multiplied by the probability of decoding the two phonemes.

- the system stores a probability for all possible phoneme combinations in memory 47.

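- the system sums, over all possible phonemes p, the probability of decoding the phoneme p as the first sequence phoneme $d^1_i$ and as the second sequence phoneme $d^2_j$, weighted by the probability of phoneme p occurring unconditionally, i.e.: $$\sum_{r=1}^{N_p} P(d^1_i \mid p_r)\, P(d^2_j \mid p_r)\, P(p_r) \qquad (1)$$ where $N_p$ is the total number of phonemes known to the system.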
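
The weighted sum described above can be sketched as follows. The two-phoneme inventory and every probability value are invented purely for illustration, and the names `prior`, `decode` and `decoding_probability` are hypothetical; `decode[p][d]` stands for the probability of decoding phoneme p as phoneme d.

```python
def decoding_probability(d1, d2, prior, decode):
    """Sum over all phonemes p of P(d1 | p) * P(d2 | p) * P(p)."""
    return sum(decode[p][d1] * decode[p][d2] * prior[p] for p in prior)


# Toy values, assumed for illustration only.
prior = {"a": 0.5, "b": 0.5}
decode = {
    "a": {"a": 0.9, "b": 0.1},
    "b": {"a": 0.2, "b": 0.8},
}

same = decoding_probability("a", "a", prior, decode)
mixed = decoding_probability("a", "b", prior, decode)
print(same, mixed)
```

With these toy values, aligning "a" with "a" scores higher than aligning "a" with "b", which is the behaviour the path scores rely on.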

- a back tracking routine can be used to identify the best alignment of the phonemes in the two input phoneme sequences.

- the phoneme sequence determination unit 45 uses this alignment to determine the sequence of phonemes which best represents the input phoneme sequences. The way in which this is achieved in this embodiment will be described later.

- A more detailed description will now be given of the processing performed by the dynamic programming alignment unit 43 when two sequences of phonemes (for two renditions of the new word) are aligned.

- the scores associated with all the nodes are set to an appropriate initial value.

- the alignment unit 43 then propagates paths from the null start node ($\phi_s$) to all possible start points defined by the dynamic programming constraints discussed above.

- the dynamic programming scores for the paths that are started are then set equal to the transition scores for passing from the null start node to the respective start points.

- the paths which are started in this way are then propagated through the array of lattice points defined by the first and second phoneme sequences until they reach the null end node $\phi_e$.

- the alignment unit 43 processes the array of lattice points column by column in a raster-like technique.

- In step s149, the system initialises a first phoneme sequence loop pointer, i, and a second phoneme sequence loop pointer, j, to zero. Then, in step s151, the system compares the first phoneme sequence loop pointer i with the number of phonemes in the first phoneme sequence (Nseq1). Initially the first phoneme sequence loop pointer i is set to zero and the processing therefore proceeds to step s153, where a similar comparison is made for the second phoneme sequence loop pointer j relative to the total number of phonemes in the second phoneme sequence (Nseq2).

- In step s155, the system propagates the path ending at lattice point (i,j) using the dynamic programming constraints discussed above. The way in which the system propagates the paths in step s155 will be described in more detail later.

- After step s155, the loop pointer j is incremented by one in step s157 and the processing returns to step s153.

- Once the current column of lattice points has been processed, in step s159 the loop pointer j is reset to zero and the loop pointer i is incremented by one.

- The processing then returns to step s151, where a similar procedure is performed for the next column of lattice points.

- Once the last column of lattice points has been processed, in step s161 the loop pointer i is reset to zero and the processing ends.
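
The raster-style control flow of these steps can be sketched as two nested loops. This is a sketch under assumptions: the pointers are taken to be zero-based and the comparisons in steps s151 and s153 are taken to be strict less-than tests, neither of which is stated explicitly in the text. The names `raster_scan` and `propagate` are hypothetical.

```python
def raster_scan(nseq1, nseq2, propagate):
    """Visit every lattice point column by column, calling propagate(i, j)."""
    i = 0                      # step s149: initialise both loop pointers
    while i < nseq1:           # step s151: any columns left?
        j = 0
        while j < nseq2:       # step s153: any points left in this column?
            propagate(i, j)    # step s155: propagate the path ending at (i, j)
            j += 1             # step s157
        i += 1                 # step s159: j is reset at the top of the loop


visited = []
raster_scan(2, 3, lambda i, j: visited.append((i, j)))
print(visited)
```

The recorded order shows each column being finished before the next one is started, which guarantees that a point's incoming paths have been scored before that point is itself propagated.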

- In step s155 shown in Figure 9, the system propagates the path ending at lattice point (i,j) using the dynamic programming constraints discussed above.

- Figure 10 is a flowchart which illustrates the processing steps involved in performing this propagation step.

- In step s211, the system sets the values of two variables mxi and mxj and initialises first phoneme sequence loop pointer i2 and second phoneme sequence loop pointer j2.

- the loop pointers i2 and j2 are provided to loop through all the lattice points to which the path ending at point (i,j) can propagate, and the variables mxi and mxj are used to ensure that i2 and j2 can only take the values which are allowed by the dynamic programming constraints.

- the variable mxj is set equal to j plus mxhops, provided this is less than or equal to the number of phonemes in the second phoneme sequence; otherwise mxj is set equal to the number of phonemes in the second phoneme sequence (Nseq2). The variable mxi is set in the corresponding way for the first phoneme sequence.

- the system initialises the first phoneme sequence loop pointer i2 to be equal to the current value of the first phoneme sequence loop pointer i and the second phoneme sequence loop pointer j2 to be equal to the current value of the second phoneme sequence loop pointer j.

- In step s219, the system compares the first phoneme sequence loop pointer i2 with the variable mxi. Since loop pointer i2 is set to i and mxi is set equal to i+4 in step s211, the processing proceeds to step s221, where a similar comparison is made for the second phoneme sequence loop pointer j2. The processing then proceeds to step s223, which ensures that the path does not stay at the same lattice point (i,j), since initially i2 equals i and j2 equals j. The processing therefore initially proceeds to step s225, where the second phoneme sequence loop pointer j2 is incremented by one.

- The processing then returns to step s221, where the incremented value of j2 is compared with mxj. If j2 is less than mxj, then the processing returns to step s223 and then proceeds to step s227, which prevents too large a hop along both phoneme sequences. It does this by ensuring that the path is only propagated if i2 + j2 is less than i + j + mxhops. This ensures that only the triangular set of points shown in Figure 7 is processed. Provided this condition is met, the processing proceeds to step s229, where the system calculates the transition score (TRANSCORE) from lattice point (i,j) to lattice point (i2,j2).

- This transition score (TRANSCORE) is then added to the cumulative score stored for point (i,j), and the result is held in a temporary store, TEMPSCORE.

- In step s233, the system compares TEMPSCORE with the cumulative score already stored for point (i2,j2); the larger score is stored in SCORE(i2,j2) and an appropriate back pointer is stored to identify which path had the larger score.

- the processing then returns to step s225 where the loop pointer j2 is incremented by one and the processing returns to step s221.

- In step s235, the loop pointer j2 is reset to the initial value j and the first phoneme sequence loop pointer i2 is incremented by one.

- the processing then returns to step s219 where the processing begins again for the next column of points shown in Figure 7. Once the path has been propagated from point (i,j) to all the other points shown in Figure 7, the processing ends.
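
The propagation step described above can be sketched as follows. This is an illustration under assumptions rather than the flowchart of Figure 10 itself: scores are taken to be log scores (so the transition score is added), indices are zero-based, `MXHOPS = 4` is inferred from "mxi is set equal to i+4", and the `transcore` callback is a hypothetical stand-in for the real transition score calculation.

```python
import math

MXHOPS = 4  # assumed value, inferred from "mxi is set equal to i+4"


def propagate(i, j, nseq1, nseq2, score, back, transcore):
    """Propagate the path ending at (i, j) to every allowed point (i2, j2)."""
    mxi = min(i + MXHOPS, nseq1)   # clip to the first sequence length
    mxj = min(j + MXHOPS, nseq2)   # clip to the second sequence length
    for i2 in range(i, mxi):
        for j2 in range(j, mxj):
            if (i2, j2) == (i, j):
                continue           # do not stay at the same point
            if i2 + j2 >= i + j + MXHOPS:
                continue           # outside the triangular region
            tempscore = score[(i, j)] + transcore((i, j), (i2, j2))
            if tempscore > score.get((i2, j2), -math.inf):
                score[(i2, j2)] = tempscore   # keep the larger score
                back[(i2, j2)] = (i, j)       # remember where it came from


# Toy transition score (an assumption): a penalty of one per hop.
def toy_transcore(src, dst):
    return -1.0 * ((dst[0] - src[0]) + (dst[1] - src[1]))


score = {(0, 0): 0.0}
back = {}
propagate(0, 0, 3, 3, score, back, toy_transcore)
print(sorted(score))
```

Storing a back pointer alongside each winning score is what later allows the best alignment to be recovered by back-tracking from the end node.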

- In step s229, the transition score from one point (i,j) to another point (i2,j2) is calculated. This involves calculating the appropriate insertion probabilities, deletion probabilities and decoding probabilities relative to the start point and end point of the transition. The way in which this is achieved in this embodiment will now be described with reference to Figures 11 and 12.

- Figure 11 shows a flow diagram which illustrates the general processing steps involved in calculating the transition score for a path propagating from lattice point (i,j) to lattice point (i2,j2).

- In step s291, the system calculates, for each first sequence phoneme which is inserted between point (i,j) and point (i2,j2), the score for inserting the inserted phoneme(s) (which is just the log of probability PI( ) discussed above) and adds this to an appropriate store, INSERTSCORE.

- the processing then proceeds to step s293 where the system performs a similar calculation for each second sequence phoneme which is inserted between point (i,j) and point (i2,j2) and adds this to INSERTSCORE.

- The processing involved in step s295 to determine the deletion and/or decoding scores in propagating from point (i,j) to point (i2,j2) will now be described in more detail with reference to Figure 12.

- In step s325, the system determines if the first phoneme sequence loop pointer i2 equals first phoneme sequence loop pointer i. If it does, then the processing proceeds to step s327 where a phoneme loop pointer r is initialised to one. The phoneme pointer r is used to loop through each possible phoneme known to the system during the calculation of equation (1) above.

- In step s329, the system compares the phoneme pointer r with the number of phonemes known to the system, Nphonemes (which in this embodiment equals 43).

- In step s331, the system determines the log probability of phoneme $p_r$ occurring (i.e. $\log P(p_r)$) and copies this to a temporary score TEMPDELSCORE. If first phoneme sequence loop pointer i2 equals loop pointer i, then the system is propagating the path ending at point (i,j) to one of the points (i,j+1), (i,j+2) or (i,j+3). Therefore, there is a phoneme in the second phoneme sequence which is not in the first phoneme sequence. Consequently, in step s333, the system adds the log probability of deleting phoneme $p_r$ from the first phoneme sequence to TEMPDELSCORE.

- After step s337, the processing proceeds to step s339 where the phoneme loop pointer r is incremented by one, and then the processing returns to step s329 where similar processing is performed for the next phoneme known to the system. Once this calculation has been performed for each of the 43 phonemes known to the system, the processing ends.

- If, in step s325, the system determines that i2 is not equal to i, then the processing proceeds to step s341 where the system determines if the second phoneme sequence loop pointer j2 equals second phoneme sequence loop pointer j. If it does, then the processing proceeds to step s343 where the phoneme loop pointer r is initialised to one. The processing then proceeds to step s345 where the phoneme loop pointer r is compared with the total number of phonemes known to the system (Nphonemes). Initially r is set to one in step s343, and therefore the processing proceeds to step s347 where the log probability of phoneme $p_r$ occurring is determined and copied into the temporary store TEMPDELSCORE.

- In step s349, the system determines the log probability of decoding phoneme $p_r$ as first sequence phoneme $d^1_{i2}$ and adds this to TEMPDELSCORE. If the second phoneme sequence loop pointer j2 equals loop pointer j, then the system is propagating the path ending at point (i,j) to one of the points (i+1,j), (i+2,j) or (i+3,j). Therefore, there is a phoneme in the first phoneme sequence which is not in the second phoneme sequence. Consequently, in step s351, the system determines the log probability of deleting phoneme $p_r$ from the second phoneme sequence and adds this to TEMPDELSCORE.

- In step s363, the log probability of decoding phoneme $p_r$ as first sequence phoneme $d^1_{i2}$ is added to TEMPDELSCORE.

- In step s365, the log probability of decoding phoneme $p_r$ as second sequence phoneme $d^2_{j2}$ is determined and added to TEMPDELSCORE.

- The system then performs, in step s367, the log addition of TEMPDELSCORE with DELSCORE and stores the result in DELSCORE.

- the phoneme counter r is then incremented by one in step s369 and the processing returns to step s359.
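
Because the per-phoneme terms are held as log probabilities while equation (1) is a sum of probabilities, the accumulation into DELSCORE needs an addition carried out in the log domain. A minimal sketch of such a "log addition" is given below; it is assumed here to mean computing log(exp(a) + exp(b)), and the function name `log_add` and the toy values are hypothetical.

```python
import math


def log_add(log_a, log_b):
    """Return log(exp(log_a) + exp(log_b)) without leaving the log domain."""
    if log_a == -math.inf:
        return log_b
    if log_b == -math.inf:
        return log_a
    hi, lo = max(log_a, log_b), min(log_a, log_b)
    return hi + math.log1p(math.exp(lo - hi))


# Accumulate toy per-phoneme terms (assumed values) into DELSCORE.
delscore = -math.inf                          # log of zero probability
for tempdelscore in (math.log(0.2), math.log(0.3)):
    delscore = log_add(tempdelscore, delscore)
print(math.exp(delscore))
```

Factoring out the larger term before exponentiating keeps the calculation stable when the log probabilities are very negative.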

- the phoneme sequence determination unit 45 determines, for each aligned pair of phonemes ($d^1_m$, $d^2_n$) of the best alignment, the unknown phoneme, p, which maximises: $$P(d^1_m \mid p)\, P(d^2_n \mid p)\, P(p)$$

- This phoneme, p is the phoneme which is taken to best represent the aligned pair of phonemes.

- the determination unit 45 identifies the sequence of canonical phonemes that best represents the two input phoneme sequences. In this embodiment, this canonical sequence is then output by the determination unit 45 and stored in the word to phoneme dictionary 23 together with the text of the new word typed in by the user.
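
The selection of a representative phoneme for each aligned pair can be sketched as a simple arg-max. The probability tables below are invented for illustration, the names `best_phoneme` and `canonical_sequence` are hypothetical, and the sketch handles only aligned pairs, not insertions or deletions.

```python
def best_phoneme(d1, d2, prior, decode):
    """Return the phoneme p maximising P(d1 | p) * P(d2 | p) * P(p)."""
    return max(prior, key=lambda p: decode[p][d1] * decode[p][d2] * prior[p])


def canonical_sequence(aligned_pairs, prior, decode):
    """Map each aligned pair of the best alignment to its best phoneme."""
    return [best_phoneme(d1, d2, prior, decode) for d1, d2 in aligned_pairs]


# Toy values, assumed for illustration only.
prior = {"a": 0.5, "b": 0.5}
decode = {
    "a": {"a": 0.9, "b": 0.1},
    "b": {"a": 0.2, "b": 0.8},
}

pairs = [("a", "a"), ("a", "b"), ("b", "b")]
result = canonical_sequence(pairs, prior, decode)
print(result)
```

Note that for the disagreeing pair ("a", "b") the chosen phoneme depends on the confusion statistics, not on a majority vote.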

- A description has been given above of the way in which the dynamic programming alignment unit 43 aligns two sequences of phonemes and the way in which the phoneme sequence determination unit 45 obtains the sequence of phonemes which best represents the two input sequences given this best alignment.

- the dynamic programming alignment unit 43 should preferably be able to align any number of input phoneme sequences and the determination unit 45 should be able to derive the phoneme sequence which best represents any number of input phoneme sequences given the best alignment between them.

- a description will now be given of the way in which the dynamic programming alignment unit 43 aligns three input phoneme sequences together and how the determination unit 45 determines the phoneme sequence which best represents the three input phoneme sequences.

- Figure 13 shows a three-dimensional coordinate plot with one dimension being provided for each of the three phoneme sequences and illustrates the three-dimensional lattice of points which are processed by the dynamic programming alignment unit 43 in this case.

- the alignment unit 43 uses the same transition scores and phoneme probabilities and similar dynamic programming constraints in order to propagate and score each of the paths through the three-dimensional network of lattice points in the plot shown in Figure 13.

- the dynamic programming alignment unit 43 propagates dynamic programming paths from the null start node $\phi_s$ to each of the start points defined by the dynamic programming constraints. It then propagates these paths from these start points to the null end node $\phi_e$ by processing the points in the search space in a raster-like fashion.

- the control algorithm used to control this raster processing operation is shown in Figure 14. As can be seen from a comparison of Figure 14 with Figure 9, this control algorithm has the same general form as the control algorithm used when there were only two phoneme sequences to be aligned.

- The processing involved in step s463 in Figure 16 to determine the deletion and/or decoding scores in propagating from point (i,j,k) to point (i2,j2,k2) will now be described in more detail with reference to Figure 17.

- the system determines (in steps s525 to s537) if there are any phoneme deletions from any of the three phoneme sequences by comparing i2, j2 and k2 with i, j and k respectively.

- As shown in Figures 17a to 17d, there are eight main branches which operate to determine the appropriate decoding and deletion probabilities for the eight possible situations. Since the processing performed in each situation is very similar, a description will only be given of one of the situations.

- The phoneme loop pointer r is set to one in step s541. The processing therefore proceeds to step s545 where the system determines the log probability of phoneme $p_r$ occurring and copies this to a temporary score TEMPDELSCORE. The processing then proceeds to step s547 where the system determines the log probability of deleting phoneme $p_r$ in the first phoneme sequence and adds this to TEMPDELSCORE. The processing then proceeds to step s549 where the system determines the log probability of decoding phoneme $p_r$ as second sequence phoneme $d^2_{j2}$ and adds this to TEMPDELSCORE.

- the term calculated within the dynamic programming algorithm for decodings and deletions is similar to equation (1) but has an additional probability term for the third phoneme sequence: $$\sum_{r=1}^{N_p} P(d^1_i \mid p_r)\, P(d^2_j \mid p_r)\, P(d^3_k \mid p_r)\, P(p_r)$$

- the dynamic programming alignment unit 43 identifies the path having the best score and uses the back pointers which have been stored for this path to identify the aligned phoneme triples (i.e. the aligned phonemes in the three sequences) which lie along this best path.

- the phoneme sequence determination unit 45 determines, for each aligned phoneme triple ($d^1_m$, $d^2_n$, $d^3_o$), the phoneme, p, which maximises: $$P(d^1_m \mid p)\, P(d^2_n \mid p)\, P(d^3_o \mid p)\, P(p)$$

- A description has been given above of the way in which the dynamic programming alignment unit 43 aligns two or three sequences of phonemes.

- the addition of a further phoneme sequence simply involves the addition of a number of loops in the control algorithm in order to account for the additional phoneme sequence.

- the alignment unit 43 can therefore identify the best alignment between any number of input phoneme sequences by identifying how many sequences are input and then ensuring that appropriate control variables are provided for each input sequence.

- the determination unit 45 can then identify the sequence of phonemes which best represents the input phoneme sequences using these alignment results.
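
The extension to any number of input sequences can be sketched by replacing the fixed two or three decoding terms with a product over however many aligned phonemes are supplied. The tables and the names `joint_decoding_probability` and `best_phoneme` are hypothetical, and `math.prod` requires Python 3.8 or later.

```python
import math


def joint_decoding_probability(observed, prior, decode):
    """Sum over p of P(p) times the product of P(d | p) for each observed d."""
    return sum(
        prior[p] * math.prod(decode[p][d] for d in observed) for p in prior
    )


def best_phoneme(observed, prior, decode):
    """Phoneme p maximising P(p) times the product of P(d | p)."""
    return max(
        prior, key=lambda p: prior[p] * math.prod(decode[p][d] for d in observed)
    )


# Toy values, assumed for illustration only.
prior = {"a": 0.5, "b": 0.5}
decode = {
    "a": {"a": 0.9, "b": 0.1},
    "b": {"a": 0.2, "b": 0.8},
}

triple = ("a", "a", "b")      # one aligned phoneme from each of three sequences
total = joint_decoding_probability(triple, prior, decode)
winner = best_phoneme(triple, prior, decode)
print(total, winner)
```

Passing a two-element tuple reproduces the two-sequence term, so the same helper covers both cases described above.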

- the method of word training described earlier is used to initially create a single postulated phoneme sequence for a word taken from a large number of example phonetic decodings of the word.

- This postulated version is then used to score all the decodings of the word according to their similarity to the postulated form. Versions with similar scores are then clustered. If more than one cluster emerges, then a postulated representation for each cluster is determined and the original decodings are re-scored and re-clustered relative to the new postulated representations. This process is then iterated until some convergence criterion is achieved. This process will now be explained in more detail with reference to Figures 18 to 20.

- Figure 18 shows in more detail the main components of the word model generation unit 31 of the third embodiment.

- the word model generation unit 31 is similar to the word model generation unit of the first embodiment.

- it includes a memory 41 which receives each phoneme sequence ($D_i$) output from the speech recognition engine 17 for each of the renditions of the new word input by the user.

- the phoneme sequences stored in the memory 41 are applied to the dynamic programming alignment unit 43 which determines the best alignment between the phoneme sequences in the manner described above.

- the phoneme sequence determination unit 45 determines (also in the manner described above) the sequence of phonemes which matches best with the input phoneme sequences.

- This best phoneme sequence ($D_{best}$) and the original input phoneme sequences ($D_i$) are then passed to an analysis unit 61 which compares the best phoneme sequence with each of the input sequences to determine how well each of the input sequences corresponds to the best sequence. If the input sequence is the same length as the best sequence, then the analysis unit does this, in this embodiment, by calculating $P(D_i \mid D_{best})$, the probability of the input sequence given the best sequence.

- the analysis unit 61 analyses each of these probabilities using a clustering algorithm to identify if different clusters can be found within these probability scores - which would indicate that the input sequences include different pronunciations for the input word.

- This is schematically illustrated in the plot shown in Figure 19.

- Figure 19 has the probability scores determined in the above manner plotted on the x-axis with the number of training sequences having that score plotted on the y-axis. (As those skilled in the art will appreciate, in practice the plot will be a histogram, since it is unlikely that many scores will be exactly the same). The two peaks 71 and 73 in this plot indicate that there are two different pronunciations of the training word.

- the analysis unit 61 assigns each of the input phoneme sequences ($D_i$) to one of the different clusters.

- the analysis unit 61 then outputs the input phoneme sequences of each cluster back to the dynamic programming alignment unit 43 which processes the input phoneme sequences in each cluster separately so that the phoneme sequence determination unit 45 can determine a representative phoneme sequence for each of the clusters.

- the phoneme sequences of the or each other cluster are stored in the memory 47.

- the analysis unit 61 compares each of the input phoneme sequences with all of the cluster representative sequences and then re-clusters the input phoneme sequences. This whole process is then iterated until a suitable convergence criterion is achieved.

- the representative sequence for each cluster identified using this process may then be stored in the word to phoneme dictionary 23 together with the typed version of the word.

- the cluster representations are input to a phoneme sequence combination unit 63 which combines the representative phoneme sequences to generate a phoneme lattice using a standard forward/backward truncation technique.

- Figure 20 shows two sequences of phonemes 75 and 77 represented by sequences A-B-C-D and A-E-C-D and Figure 21 shows the resulting phoneme lattice 79 obtained by combining the two sequences shown in Figure 20 using the forward/backward truncation technique.

- the phoneme lattice 79 output by the phoneme combination unit 63 is then stored in the word to phoneme dictionary 23 together with the typed version of the word.
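- The text does not spell out the forward/backward truncation technique. The sketch below assumes one simple reading of it: a forward pass strips the prefix shared by both sequences, a backward pass strips the shared suffix, and whatever remains becomes parallel branches of the lattice. The function name `combine_sequences` and the (prefix, branches, suffix) representation are hypothetical.

```python
def combine_sequences(seq_a, seq_b):
    """Merge two phoneme sequences into (shared prefix, branches, shared suffix)."""
    shortest = min(len(seq_a), len(seq_b))
    # Forward pass: length of the common prefix.
    start = 0
    while start < shortest and seq_a[start] == seq_b[start]:
        start += 1
    # Backward pass: length of the common suffix, never overlapping the prefix.
    end = 0
    while (end < shortest - start
           and seq_a[len(seq_a) - 1 - end] == seq_b[len(seq_b) - 1 - end]):
        end += 1
    prefix = list(seq_a[:start])
    suffix = list(seq_a[len(seq_a) - end:])
    branches = [
        list(seq_a[start:len(seq_a) - end]),
        list(seq_b[start:len(seq_b) - end]),
    ]
    return prefix, branches, suffix


# The two sequences of Figure 20.
prefix, branches, suffix = combine_sequences(list("ABCD"), list("AECD"))
```

- For the sequences A-B-C-D and A-E-C-D this yields a shared A, a branch between B and E, and a shared C-D.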

- the dynamic programming alignment unit 43 used 1892 decoding/deletion probabilities and 43 insertion probabilities to score the dynamic programming paths in the phoneme alignment operation.

- these probabilities are determined in advance during a training session and are stored in the memory 47.

- a speech recognition system is used to provide a phoneme decoding of speech in two ways. In the first way, the speech recognition system is provided with both the speech and the actual words which are spoken. The speech recognition system can therefore use this information to generate the canonical phoneme sequence of the spoken words to obtain an ideal decoding of the speech. The speech recognition system is then used to decode the same speech, but this time without knowledge of the actual words spoken (referred to hereinafter as the free decoding).

- the phoneme sequence generated from the free decoding will differ from the canonical phoneme sequence in the following ways:

- the probability of decoding phoneme p as phoneme d is given by:

$$P(d \mid p) = \frac{c_{dp}}{n_p}$$

where $c_{dp}$ is the number of times the automatic speech recognition system decoded d when it should have been p and $n_p$ is the number of times the automatic speech recognition system decoded anything (including a deletion) when it should have been p.
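- The count-based estimate above can be sketched as follows. The input format (a list of aligned canonical/decoded pairs, with `None` standing for a deletion) and the function name `estimate_decoding_probs` are assumptions made for illustration.

```python
from collections import Counter


def estimate_decoding_probs(aligned_pairs):
    """Return P(d|p) keyed by (d, p), estimated as c_dp / n_p.

    aligned_pairs is a list of (canonical p, decoded d); d is None for a deletion.
    """
    c = Counter(aligned_pairs)               # c[(p, d)]: times p was decoded as d
    n = Counter(p for p, _ in aligned_pairs)  # n[p]: times p was decoded as anything
    return {(d, p): count / n[p] for (p, d), count in c.items()}


# Hypothetical alignment of canonical /ax/ against four free decodings.
pairs = [("ax", "ax"), ("ax", "ax"), ("ax", "ah"), ("ax", None)]
probs = estimate_decoding_probs(pairs)
```

- Because a deletion is counted as one more decoding outcome, the estimates for a given canonical phoneme sum to one.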

- Although the term "phoneme" has been used throughout the above description, the present application is not limited to its linguistic meaning, but includes the different sub-word units that are normally identified and used in standard speech recognition systems.

- The term "phoneme" covers any such sub-word unit, such as phones, syllables or katakana (Japanese alphabet).

- the dynamic programming alignment unit calculated decoding scores for each transition using equation (1) above.

- the dynamic programming alignment unit may be arranged, instead, to identify the unknown phoneme, p, which maximises the probability term within the summation and to use this maximum probability term as the probability of decoding the corresponding phonemes in the input sequences.

- the dynamic programming alignment unit would also preferably store an indication of the phoneme which maximised this probability with the appropriate back pointer, so that after the best alignment between the input phoneme sequences has been determined, the sequence of phonemes which best represents the input sequences can simply be determined by the phoneme sequence determination unit from this stored data.
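- A minimal sketch of this alternative, assuming each term of the summation has the form P(d1|p) P(d2|p) P(p). The function name `best_decoding` and the toy probability tables are hypothetical.

```python
def best_decoding(d1, d2, phonemes, p_dec, p_prior):
    """Return the largest term P(d1|p) * P(d2|p) * P(p) and the phoneme p giving it."""
    def term(p):
        return p_dec[(d1, p)] * p_dec[(d2, p)] * p_prior[p]

    best_p = max(phonemes, key=term)
    return term(best_p), best_p


# Toy tables for a two-phoneme inventory; p_dec is keyed by (decoded, canonical).
phonemes = ["a", "b"]
p_prior = {"a": 0.5, "b": 0.5}
p_dec = {("a", "a"): 0.8, ("b", "a"): 0.2, ("a", "b"): 0.3, ("b", "b"): 0.7}

score, phoneme = best_decoding("a", "b", phonemes, p_dec, p_prior)
```

- Returning the maximising phoneme alongside the score is what allows it to be stored with the back pointer and read off directly once the best alignment is known.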

- the insertion, deletion and decoding probabilities were calculated from statistics of the speech recognition system using a maximum likelihood estimate of the probabilities.

- other techniques, such as maximum entropy techniques, can be used to estimate these probabilities. Details of a suitable maximum entropy technique can be found at pages 45 to 52 in the book entitled "Maximum Entropy and Bayesian Methods", published by Kluwer Academic Publishers and written by John Skilling.

- equation (1) was calculated for each aligned pair of phonemes.

- the first sequence phoneme and the second sequence phoneme were compared with each of the phonemes known to the system.

- the aligned phonemes may only be compared with a subset of all the known phonemes, which subset is determined in advance from the training data.

- the input phonemes to be aligned could be used to address a lookup table which would identify the phonemes which need to be compared with them using equation (1) (or its multi-input sequence equivalent).

- the user input a number of spoken renditions of the new input word together with a typed rendition of the new word.

- it may be input as a handwritten version which is subsequently converted into text using appropriate handwriting recognition software.

- new word models were generated for use in a speech recognition system.

- the new word models are stored together with a text version of the word so that the text can be used in a word processing application.

- the word models may be used as a control command rather than being for use in generating corresponding text. In this case, rather than storing text corresponding to the new word model, the corresponding control action or command would be input and stored.

Description

- The present invention relates to the determination of phoneme or phoneme like models for words or commands which can be added to a word/command dictionary and used in speech processing related applications, such as speech recognition. The invention particularly relates to the generation of canonical and non-canonical phoneme sequences which represent the pronunciation of input words, which sequences can be used in speech processing applications.

- The use of speech recognition systems is becoming more and more popular due to the increased processing power available to perform the recognition operation. Most speech recognition systems can be classified into small vocabulary systems and large vocabulary systems. In small vocabulary systems the speech recognition engine usually compares the input speech to be recognised with acoustic patterns representative of the words known to the system. In the case of large vocabulary systems, it is not practical to store a word model for each word known to the system. Instead, the reference patterns usually represent phonemes of a given language. In this way, the input speech is compared with the phoneme patterns to generate a sequence of phonemes representative of the input speech. A word decoder is then used to identify words within the sequence of phonemes using a word to phoneme dictionary.

- A problem with large vocabulary speech recognition systems is that if the user speaks a word which is not in the word dictionary, then a mis-recognition will occur and the speech recognition system will output the word or words which sound most similar to the out of vocabulary word actually spoken. This problem can be overcome by providing a mechanism which allows users to add new word models for out of vocabulary words. To date, this has predominantly been achieved by generating acoustic patterns representative of the out of vocabulary words. However, this requires the speech recognition system to match the input speech with two different types of model - phoneme models and word models, which slows down the recognition process. Other systems allow the user to add a phonetic spelling to the word dictionary in order to cater for out of vocabulary words. However, this requires the user to explicitly provide each phoneme for the new word and this is not practical for users who have a limited knowledge of the system and who do not know the phonemes which make up the word. An alternative technique would be to decode the new word into a sequence of phonemes using a speech recognition system and treat the decoded sequence of phonemes as being correct. However, as even the best systems today have an accuracy of less than 80%, this would introduce a number of errors which would ultimately lead to a lower recognition rate of the system.

- GB-A-2349260 describes a system for generating new reference models for adding to a speech recognition dictionary from three or more training signals. The system simultaneously compares and aligns the three or more training signals with each other and, from the alignment results, generates a reference model representative of the training signals.

- One aim of the present invention, as set out in the appended claims, is to provide an alternative technique for generating a phoneme or phoneme-like sequence representative of new words to be added to a word dictionary or command dictionary which may be used in, for example, a speech recognition system.

- Exemplary embodiments of the present invention will now be described in more detail with reference to the accompanying drawings in which:

- Figure 1 is a schematic block view of a computer which may be programmed to operate an embodiment of the present invention;

- Figure 2 is a schematic diagram of an overview of a speech recognition system embodying the present invention;

- Figure 3 is a schematic block diagram illustrating the main components of the word model generation unit which forms part of the speech recognition system shown in Figure 2;

- Figure 4 is a schematic diagram which shows a first and second sequence of phonemes representative of two renditions of a new word after being processed by the speech recognition engine shown in Figure 2, and a third sequence of phonemes which best represents the first and second sequence of phonemes, and which illustrates the possibility of there being phoneme insertions and deletions from the first and second sequence of phonemes relative to the third sequence of phonemes;

- Figure 5 schematically illustrates a search space created by the sequences of phonemes for the two renditions of the new word together with a start null node and an end null node;

- Figure 6 is a two-dimensional plot with the horizontal axis being provided for the phonemes corresponding to one rendition of the new word and the vertical axis being provided for the phonemes corresponding to the other rendition of the new word, and showing a number of lattice points, each corresponding to a possible match between a phoneme of the first rendition of the word and a phoneme of the second rendition of the word;

- Figure 7 schematically illustrates the dynamic programming constraints employed by the dynamic programming alignment unit which forms part of the word model generation unit shown in Figure 3;

- Figure 8 schematically illustrates the deletion and decoding probabilities which are stored for an example phoneme and which are used in the scoring during the dynamic programming alignment process performed by the alignment unit shown in Figure 3;

- Figure 9 is a flow diagram illustrating the main processing steps performed by the dynamic programming matching alignment unit shown in Figure 3;

- Figure 10 is a flow diagram illustrating the main processing steps employed to propagate dynamic programming paths from the null start node to the null end node;

- Figure 11 is a flow diagram illustrating the processing steps involved in determining a transition score for propagating a path during the dynamic programming matching process;

- Figure 12 is a flow diagram illustrating the processing steps employed in calculating scores for deletions and decodings of the first and second phoneme sequences corresponding to the word renditions;

- Figure 13 schematically illustrates a search space created by three sequences of phonemes generated for three renditions of a new word;

- Figure 14 is a flow diagram illustrating the main processing steps employed to propagate dynamic programming paths from the null start node to the null end node shown in Figure 13;

- Figure 15 is a flow diagram illustrating the processing steps employed in propagating a path during the dynamic programming process;

- Figure 16 is a flow diagram illustrating the processing steps involved in determining a transition score for propagating a path during the dynamic programming matching process;

- Figure 17a is a flow diagram illustrating a first part of the processing steps employed in calculating scores for deletions and decodings of phonemes during the dynamic programming matching process;

- Figure 17b is a flow diagram illustrating a second part of the processing steps employed in calculating scores for deletions and decodings of phonemes during the dynamic programming matching process;

- Figure 17c is a flow diagram illustrating a third part of the processing steps employed in calculating scores for deletions and decodings of phonemes during the dynamic programming matching process;

- Figure 17d is a flow diagram illustrating the remaining steps employed in the processing steps employed in calculating scores for deletions and decodings of phonemes during the dynamic programming matching process;

- Figure 18 is a schematic block diagram illustrating the main components of an alternative word model generation unit which may be used in the speech recognition system shown in Figure 2;

- Figure 19 is a plot illustrating the way in which probability scores vary with different pronunciations of input words;

- Figure 20 schematically illustrates two sequences of phonemes; and

- Figure 21 schematically illustrates a phoneme lattice formed by combining the two phoneme sequences illustrated in Figure 20.

- Embodiments of the present invention can be implemented using dedicated hardware circuits, but the embodiment that is to be described is implemented in computer software or code, which is run in conjunction with a personal computer. In alternative embodiments, the software may be run in conjunction with a workstation, photocopier, facsimile machine, personal digital assistant (PDA), web browser or the like.

- Figure 1 shows a personal computer (PC) 1 which is programmed to operate an embodiment of the present invention. A keyboard 3, a pointing device 5, a microphone 7 and a telephone line 9 are connected to the PC 1 via an interface 11. The keyboard 3 and pointing device 5 enable the system to be controlled by a user. The microphone 7 converts the acoustic speech signal of the user into an equivalent electrical signal and supplies this to the PC 1 for processing. An internal modem and speech receiving circuit (not shown) may be connected to the telephone line 9 so that the PC 1 can communicate with, for example, a remote computer or with a remote user.

- The program instructions which make the PC 1 operate in accordance with the present invention may be supplied for use with the PC 1 on, for example, a storage device such as a magnetic disc 13 or by downloading the software from a remote computer over, for example, the Internet via the internal modem and telephone line 9.

- The operation of the speech recognition system 14 implemented in the PC 1 will now be described in more detail with reference to Figure 2. Electrical signals representative of the user's input speech from the microphone 7 are applied to a preprocessor 15 which converts the input speech signal into a sequence of parameter frames, each representing a corresponding time frame of the input speech signal. The sequence of parameter frames output by the preprocessor 15 is then supplied to the speech recognition engine 17 where the speech is recognised by comparing the input sequence of parameter frames with phoneme models 19 to generate a sequence of phonemes representative of the input utterance. During the normal mode of operation of the speech recognition system 14, this sequence of phonemes is input, via a switch 20, to the word decoder 21 which identifies words within the generated phoneme sequence by comparing the phoneme sequence with those stored in a word to phoneme dictionary 23. The words 25 output by the word decoder 21 are then used by the PC 1 to either control the software applications running on the PC 1 or for insertion as text in a word processing program running on the PC 1.

- In order to be able to add words to the word to phoneme dictionary 23, the speech recognition system 14 also has a training mode of operation. This is activated by the user applying an appropriate command through the user interface 27 using the keyboard 3 or the pointing device 5. This request to enter the training mode is passed to the control unit 29 which causes the switch 20 to connect the output of the speech recognition engine 17 to the input of a word model generation unit 31. At the same time, the control unit 29 outputs a prompt to the user, via the user interface 27, to provide several renditions of the word to be added. Each of these renditions is processed by the preprocessor 15 and the speech recognition engine 17 to generate a plurality of sequences of phonemes, each representative of a respective rendition of the new word. These sequences of phonemes are input to the word model generation unit 31 which processes them to identify the most probable phoneme sequence which could have been mis-recognised as all the training examples, and this sequence is stored, together with a typed version of the word input by the user, in the word to phoneme dictionary 23. After the user has finished adding words to the dictionary 23, the control unit 29 returns the speech recognition system 14 to its normal mode of operation by connecting the output of the speech recognition engine 17 back to the word decoder 21 through the switch 20.

- Figure 3 shows in more detail the components of the word model generation unit 31 discussed above. As shown, there is a memory 41 which receives each phoneme sequence output from the speech recognition engine 17 for each of the renditions of the new word input by the user. After the user has finished inputting the training examples (which is determined from an input received from the user through the user interface 27), the phoneme sequences stored in the memory 41 are applied to the dynamic programming alignment unit 43 which, in this embodiment, uses a dynamic programming alignment technique to compare the phoneme sequences and to determine the best alignment between them. In this embodiment, the alignment unit 43 performs the comparison and alignment of all the phoneme sequences at the same time. The identified alignment between the input sequences is then input to a phoneme sequence determination unit 45, which uses this alignment to determine the sequence of phonemes which matches best with the input phoneme sequences.

- As those skilled in the art will appreciate, each phoneme sequence representative of a rendition of the new word can have insertions and deletions relative to this unknown sequence of phonemes which matches best with all the input sequences of phonemes. This is illustrated in Figure 4, which shows a possible matching between a first phoneme sequence (labelled d1 i, d1 i+1, d1 i+2 ...) representative of a first rendition of the new word, a second phoneme sequence (labelled d2 j, d2 j+1, d2 j+2 ...) representative of a second rendition of the new word and a sequence of phonemes (labelled pn, pn+1, pn+2 ...) which represents a canonical sequence of phonemes of the text which best matches the two input sequences. As shown in Figure 4, the dynamic programming alignment unit 43 must allow for the insertion of phonemes in both the first and second phoneme sequences (represented by the inserted phonemes d1 i+3 and d2 j+1) as well as the deletion of phonemes from the first and second phoneme sequences (represented by phonemes d1 i+1 and d2 j+2, which are both aligned with two phonemes in the canonical sequence of phonemes), relative to the canonical sequence of phonemes.

- As those skilled in the art of speech processing know, dynamic programming is a technique which can be used to find the optimum alignment between sequences of features, which in this embodiment are phonemes. In the simple case where there are two renditions of the new word (and hence only two sequences of phonemes to be aligned), the dynamic programming alignment unit 43 calculates the optimum alignment by simultaneously propagating a plurality of dynamic programming paths, each of which represents a possible alignment between a sequence of phonemes from the first sequence (representing the first rendition) and a sequence of phonemes from the second sequence (representing the second rendition). All paths begin at a start null node which is at the beginning of the two input sequences of phonemes and propagate until they reach an end null node, which is at the end of the two sequences of phonemes.

- Figures 5 and 6 schematically illustrate the alignment which is performed and this path propagation. In particular, Figure 5 shows a rectangular coordinate plot with the horizontal axis being provided for the first phoneme sequence representative of the first rendition and the vertical axis being provided for the second phoneme sequence representative of the second rendition. The start null node øs is provided at the top left hand corner and the end null node øe is provided at the bottom right hand corner. As shown in Figure 6, the phonemes of the first sequence are provided along the horizontal axis and the phonemes of the second sequence are provided down the vertical axis. Figure 6 also shows a number of lattice points, each of which represents a possible alignment (or decoding) between a phoneme of the first phoneme sequence and a phoneme of the second phoneme sequence. For example, lattice point 21 represents a possible alignment between first sequence phoneme d1 3 and second sequence phoneme d2 1. Figure 6 also shows three dynamic programming paths m1, m2 and m3 which represent three possible alignments between the first and second phoneme sequences and which begin at the start null node øs and propagate through the lattice points to the end null node øe.

- In order to determine the best alignment between the first and second phoneme sequences, the dynamic programming alignment unit 43 keeps a score for each of the dynamic programming paths which it propagates, which score is dependent upon the overall similarity of the phonemes which are aligned along the path. Additionally, in order to limit the number of deletions and insertions of phonemes in the sequences being aligned, the dynamic programming process places certain constraints on the way in which each dynamic programming path can propagate.

- Figure 7 shows the dynamic programming constraints which are used in this embodiment. In particular, if a dynamic programming path ends at lattice point (i,j), representing an alignment between phoneme d1 i of the first phoneme sequence and phoneme d2 j of the second phoneme sequence, then that dynamic programming path can propagate to the lattice points (i+1,j), (i+2,j), (i+3,j), (i,j+1), (i+1,j+1), (i+2,j+1), (i,j+2), (i+1,j+2) and (i,j+3). These propagations therefore allow the insertion and deletion of phonemes in the first and second phoneme sequences relative to the unknown canonical sequence of phonemes corresponding to the text of what was actually spoken.
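- The nine permitted propagations can be generated from a single bound on the combined hop. The sketch below assumes a constant `mxhops` of four (one more than the largest hop of three phonemes); the function name `allowed_moves` is hypothetical.

```python
def allowed_moves(mxhops=4):
    """Offsets (di, dj) a path ending at (i, j) may take under the constraints."""
    return [
        (di, dj)
        for di in range(mxhops)
        for dj in range(mxhops)
        if 0 < di + dj < mxhops  # must move, and the combined hop is at most three
    ]


moves = allowed_moves()
```

- Adding each offset to (i,j) reproduces the triangular set of lattice points listed above.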

- As mentioned above, the dynamic programming alignment unit 43 keeps a score for each of the dynamic programming paths, which score is dependent upon the similarity of the phonemes which are aligned along the path. Therefore, when propagating a path ending at point (i,j) to these other points, the dynamic programming process adds the respective "cost" of doing so to the cumulative score for the path ending at point (i,j), which is stored in a store (SCORE(i,j)) associated with that point. In this embodiment, this cost includes insertion probabilities for any inserted phonemes, deletion probabilities for any deletions and decoding probabilities for a new alignment between a phoneme from the first phoneme sequence and a phoneme from the second phoneme sequence. In particular, when there is an insertion, the cumulative score is multiplied by the probability of inserting the given phoneme; when there is a deletion, the cumulative score is multiplied by the probability of deleting the phoneme; and when there is a decoding, the cumulative score is multiplied by the probability of decoding the two phonemes.

- In order to be able to calculate these probabilities, the system stores a probability for all possible phoneme combinations in memory 47. In this embodiment, the deletion of a phoneme from either the first or second phoneme sequence is treated in a similar manner to a decoding. This is achieved by simply treating a deletion as another phoneme. Therefore, if there are 43 phonemes known to the system, then the system will store one thousand eight hundred and ninety two (1892 = 43 x 44) decoding/deletion probabilities, one for each possible phoneme decoding and deletion. This is illustrated in Figure 8, which shows the possible phoneme decodings which are stored for the phoneme /ax/ and which includes the deletion phoneme (0) as one of the possibilities. As those skilled in the art will appreciate, all the decoding probabilities for a given phoneme must sum to one, since there are no other possibilities. In addition to these decoding/deletion probabilities, 43 insertion probabilities (PI( )), one for each possible phoneme insertion, are also stored in the memory 47. As will be described later, these probabilities are determined in advance from training data.

- In this embodiment, to calculate the probability of decoding a phoneme (d2 j) from the second phoneme sequence as a phoneme (d1 i) from the first phoneme sequence, the system sums, over all possible phonemes p, the probability of decoding the phoneme p as the first sequence phoneme d1 i and as the second sequence phoneme d2 j, weighted by the probability of phoneme p occurring unconditionally, i.e.:

$$P(d^1_i, d^2_j) = \sum_{p} P(d^1_i \mid p)\, P(d^2_j \mid p)\, P(p) \qquad (1)$$
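- A minimal sketch of the stored tables and of the summation over all phonemes p described above. The three-phoneme inventory, the uniform probabilities and the names `uniform_tables` and `decoding_probability` are assumptions for illustration; a real system would use the 43-phoneme tables estimated from training data.

```python
DELETION = None  # the deletion "phoneme" is treated as one more decoding outcome


def uniform_tables(phonemes):
    """Build toy tables: P(d|p) keyed by (d, p), and the unconditional P(p)."""
    outcomes = list(phonemes) + [DELETION]
    p_dec = {(d, p): 1.0 / len(outcomes) for p in phonemes for d in outcomes}
    p_prior = {p: 1.0 / len(phonemes) for p in phonemes}
    return p_dec, p_prior


def decoding_probability(d1, d2, phonemes, p_dec, p_prior):
    """Sum over every canonical phoneme p of P(d1|p) * P(d2|p) * P(p)."""
    return sum(p_dec[(d1, p)] * p_dec[(d2, p)] * p_prior[p] for p in phonemes)


phonemes = ["ax", "b", "t"]
p_dec, p_prior = uniform_tables(phonemes)
table_size = len(p_dec)  # N * (N + 1) entries: 3 * 4 here, 43 * 44 = 1892 in the text
prob = decoding_probability("ax", "b", phonemes, p_dec, p_prior)
```

- Keying the table by (decoded, canonical) pairs and including the deletion outcome gives N x (N + 1) entries, which matches the 1892 probabilities quoted for 43 phonemes.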

- To illustrate the score propagations, an example will now be considered. In particular, when propagating from lattice point (i,j) to lattice point (i+2,j+1), the phoneme d1 i+1 from the first phoneme sequence is inserted relative to the second phoneme sequence and there is a decoding between phoneme d1 i+2 from the first phoneme sequence and phoneme d2 j+1 from the second phoneme sequence. Therefore, the score propagated to point (i+2,j+1) is given by:

$$\mathrm{SCORE}(i+2, j+1) = \mathrm{SCORE}(i, j) \cdot PI(d^1_{i+1}) \cdot \sum_{p} P(d^1_{i+2} \mid p)\, P(d^2_{j+1} \mid p)\, P(p)$$

- As those skilled in the art will appreciate, during this path propagation, several paths will meet at the same lattice point. In order that the best path is propagated, a comparison between the scores is made at each lattice point and the path having the best score is continued whilst the other path(s) is (are) discarded. In order that the best alignment between the two input phoneme sequences can be determined, where paths meet and paths are discarded, a back pointer is stored pointing to the lattice point from which the path which was not discarded propagated. In this way, once the dynamic programming alignment unit 43 has propagated the paths through to the null end node and the path having the overall best score has been determined, a back tracking routine can be used to identify the best alignment of the phonemes in the two input phoneme sequences. The phoneme sequence determination unit 45 then uses this alignment to determine the sequence of phonemes which best represents the input phoneme sequences. The way in which this is achieved in this embodiment will be described later.

- A more detailed description will now be given of the operation of the dynamic programming alignment unit 43 when two sequences of phonemes (for two renditions of the new word) are aligned. Initially, the scores associated with all the nodes are set to an appropriate initial value. The alignment unit 43 then propagates paths from the null start node (øs) to all possible start points defined by the dynamic programming constraints discussed above. The dynamic programming scores for the paths that are started are then set equal to the transition scores for passing from the null start node to the respective start points. The paths which are started in this way are then propagated through the array of lattice points defined by the first and second phoneme sequences until they reach the null end node øe. To do this, the alignment unit 43 processes the array of lattice points column by column in a raster-like technique.

- The control algorithm used to control this raster processing operation is shown in Figure 9. As shown, in step s149, the system initialises a first phoneme sequence loop pointer, i, and a second phoneme loop pointer, j, to zero. Then in step s151, the system compares the first phoneme sequence loop pointer i with the number of phonemes in the first phoneme sequence (Nseq1). Initially the first phoneme sequence loop pointer i is set to zero and the processing therefore proceeds to step s153 where a similar comparison is made for the second phoneme sequence loop pointer j relative to the total number of phonemes in the second phoneme sequence (Nseq2). Initially the loop pointer j is also set to zero and therefore the processing proceeds to step s155 where the system propagates the path ending at lattice point (i,j) using the dynamic programming constraints discussed above. The way in which the system propagates the paths in step s155 will be described in more detail later. After step s155, the loop pointer j is incremented by one in step s157 and the processing returns to step s153. Once this processing has looped through all the phonemes in the second phoneme sequence (thereby processing the current column of lattice points), the processing proceeds to step s159 where the loop pointer j is reset to zero and the loop pointer i is incremented by one. The processing then returns to step s151 where a similar procedure is performed for the next column of lattice points. Once the last column of lattice points has been processed, the processing proceeds to step s161 where the loop pointer i is reset to zero and the processing ends.
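- The raster scan of Figure 9 can be sketched as two nested loops. The sketch assumes zero-based loop pointers and a "less than" comparison against Nseq1 and Nseq2, neither of which is stated explicitly in the text; `raster_scan` and the `propagate` callback are hypothetical names.

```python
def raster_scan(nseq1, nseq2, propagate):
    """Visit every lattice point column by column, calling propagate(i, j)."""
    for i in range(nseq1):       # s151: compare i with Nseq1; s159: increment i
        for j in range(nseq2):   # s153: compare j with Nseq2; s157: increment j
            propagate(i, j)      # s155: propagate the path ending at (i, j)


# Record the visiting order for a small 2 x 3 lattice.
visited = []
raster_scan(2, 3, lambda i, j: visited.append((i, j)))
```

- Each column (fixed i) is completed before the next begins, so every path ending at (i,j) has its final score before it is propagated further.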

- In step s155 shown in Figure 9, the system propagates the path ending at lattice point (i,j) using the dynamic programming constraints discussed above. Figure 10 is a flowchart which illustrates the processing steps involved in performing this propagation step. As shown, in step s211, the system sets the values of two variables mxi and mxj and initialises first phoneme sequence loop pointer i2 and second phoneme sequence loop pointer j2. The loop pointers i2 and j2 are provided to loop through all the lattice points to which the path ending at point (i,j) can propagate, and the variables mxi and mxj are used to ensure that i2 and j2 can only take the values which are allowed by the dynamic programming constraints. In particular, mxi is set equal to i plus mxhops (which is a constant having a value which is one more than the maximum number of "hops" allowed by the dynamic programming constraints and in this embodiment is set to a value of four, since a path can jump at most to a phoneme that is three phonemes further along the sequence), provided this is less than or equal to the number of phonemes in the first phoneme sequence, otherwise mxi is set equal to the number of phonemes in the first phoneme sequence (Nseq1). Similarly, mxj is set equal to j plus mxhops, provided this is less than or equal to the number of phonemes in the second phoneme sequence, otherwise mxj is set equal to the number of phonemes in the second phoneme sequence (Nseq2). Finally, in step s211, the system initialises the first phoneme sequence loop pointer i2 to be equal to the current value of the first phoneme sequence loop pointer i and the second phoneme sequence loop pointer j2 to be equal to the current value of the second phoneme sequence loop pointer j.

- The processing then proceeds to step s219 where the system compares the first phoneme sequence loop pointer i2 with the variable mxi. Since loop pointer i2 is set to i and mxi is set equal to i+4, in step s211, the processing will proceed to step s221 where a similar comparison is made for the second phoneme sequence loop pointer j2. The processing then proceeds to step s223 which ensures that the path does not stay at the same lattice point (i,j) since initially, i2 will equal i and j2 will equal j. Therefore, the processing will initially proceed to step s225 where the second phoneme sequence loop pointer j2 is incremented by one.

- The processing then returns to step s221 where the incremented value of j2 is compared with mxj. If j2 is less than mxj, then the processing returns to step s223 and then proceeds to step s227, which is operable to prevent too large a hop along both phoneme sequences. It does this by ensuring that the path is only propagated if i2 + j2 is less than i + j + mxhops. This ensures that only the triangular set of points shown in Figure 7 are processed. Provided this condition is met, the processing proceeds to step s229 where the system calculates the transition score (TRANSCORE) from lattice point (i,j) to lattice point (i2,j2). In this embodiment, the transition and cumulative scores are probability based and they are combined by multiplying the probabilities together. However, in this embodiment, in order to remove the need to perform multiplications and in order to avoid the use of high floating point precision, the system employs log probabilities for the transition and cumulative scores. Therefore, in step s231, the system adds this transition score to the cumulative score stored for the point (i,j) and copies this to a temporary store, TEMPSCORE.

- As mentioned above, in this embodiment, if two or more dynamic programming paths meet at the same lattice point, the cumulative scores associated with each of the paths are compared and all but the best path (i.e. the path having the best score) are discarded. Therefore, in step s233, the system compares TEMPSCORE with the cumulative score already stored for point (i2,j2) and the larger score is stored in SCORE (i2,j2) and an appropriate back pointer is stored to identify which path had the larger score. The processing then returns to step s225 where the loop pointer j2 is incremented by one and the processing returns to step s221. Once the second phoneme sequence loop pointer j2 has reached the value of mxj, the processing proceeds to step s235, where the loop pointer j2 is reset to the initial value j and the first phoneme sequence loop pointer i2 is incremented by one. The processing then returns to step s219 where the processing begins again for the next column of points shown in Figure 7. Once the path has been propagated from point (i,j) to all the other points shown in Figure 7, the processing ends.
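
The propagation loop of Figure 10 (steps s211 to s235) can be sketched in Python as follows. This is an illustrative sketch only: the dictionary-based `score` and `back` stores, the function name `propagate` and the `transition_score` callback are assumptions introduced here, and unvisited lattice points are assumed to hold a log score of minus infinity.

```python
import math


def propagate(i, j, score, back, nseq1, nseq2, transition_score, mxhops=4):
    """Propagate the path ending at (i, j) to every allowed point (i2, j2).

    score: dict mapping lattice point -> best cumulative log score (assumed store)
    back:  dict mapping lattice point -> predecessor on the best path (assumed store)
    transition_score: callable (i, j, i2, j2) -> log transition score (TRANSCORE)
    """
    mxi = min(i + mxhops, nseq1)                    # step s211
    mxj = min(j + mxhops, nseq2)
    for i2 in range(i, mxi):                        # steps s219 / s235
        for j2 in range(j, mxj):                    # steps s221 / s225
            if (i2, j2) == (i, j):                  # step s223: do not stay put
                continue
            if i2 + j2 >= i + j + mxhops:           # step s227: hop too large
                continue
            tempscore = score[(i, j)] + transition_score(i, j, i2, j2)  # s229, s231
            if tempscore > score.get((i2, j2), -math.inf):              # s233
                score[(i2, j2)] = tempscore
                back[(i2, j2)] = (i, j)             # back pointer for backtracking
```

With mxhops equal to four and no clamping at the sequence ends, the two tests leave nine reachable points from (i,j), which corresponds to the triangular set of points referred to above.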

- In step s229 the transition score from one point (i,j) to another point (i2,j2) is calculated. This involves calculating the appropriate insertion probabilities, deletion probabilities and decoding probabilities relative to the start point and end point of the transition. The way in which this is achieved in this embodiment, will now be described with reference to Figures 11 and 12.

- In particular, Figure 11 shows a flow diagram which illustrates the general processing steps involved in calculating the transition score for a path propagating from lattice point (i,j) to lattice point (i2,j2). In step s291, the system calculates, for each first sequence phoneme which is inserted between point (i,j) and point (i2,j2), the score for inserting the inserted phoneme(s) (which is just the log of probability PI( ) discussed above) and adds this to an appropriate store, INSERTSCORE. The processing then proceeds to step s293 where the system performs a similar calculation for each second sequence phoneme which is inserted between point (i,j) and point (i2,j2) and adds this to INSERTSCORE. As mentioned above, the scores which are calculated are log based probabilities, therefore the addition of the scores in INSERTSCORE corresponds to the multiplication of the corresponding insertion probabilities. The processing then proceeds to step s295 where the system calculates (in accordance with equation (1) above) the scores for any deletions and/or any decodings in propagating from point (i,j) to point (i2,j2) and these scores are added and stored in an appropriate store, DELSCORE. The processing then proceeds to step s297 where the system adds INSERTSCORE and DELSCORE and copies the result to TRANSCORE.
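
The composition of TRANSCORE in steps s291 to s297 can be sketched as below. The sketch assumes that the phonemes "inserted" between (i,j) and (i2,j2) are those strictly between the start and end indices in each sequence; that indexing, the `log_pi` lookup table and the function names are assumptions made for illustration and are not taken from the text.

```python
def insert_score(seq1, seq2, i, j, i2, j2, log_pi):
    """Sum the log insertion probabilities (INSERTSCORE), steps s291 and s293.

    log_pi maps a phoneme to the log of its insertion probability PI().
    Assumption: the inserted phonemes are those skipped over by the hop,
    i.e. indices i+1 .. i2-1 of seq1 and j+1 .. j2-1 of seq2.
    """
    score = 0.0
    for k in range(i + 1, i2):      # first sequence insertions (s291)
        score += log_pi[seq1[k]]
    for k in range(j + 1, j2):      # second sequence insertions (s293)
        score += log_pi[seq2[k]]
    return score


def transition_score(insertscore, delscore):
    """Step s297: adding log scores multiplies the underlying probabilities."""
    return insertscore + delscore
```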

- The processing involved in step s295 to determine the deletion and/or decoding scores in propagating from point (i,j) to point (i2,j2) will now be described in more detail with reference to Figure 12. As shown, initially in step s325, the system determines if the first phoneme sequence loop pointer i2 equals first phoneme sequence loop pointer i. If it does, then the processing proceeds to step s327 where a phoneme loop pointer r is initialised to one. The phoneme pointer r is used to loop through each possible phoneme known to the system during the calculation of equation (1) above. The processing then proceeds to step s329, where the system compares the phoneme pointer r with the number of phonemes known to the system, Nphonemes (which in this embodiment equals 43). Initially r is set to one in step s327, therefore the processing proceeds to step s331 where the system determines the log probability of phoneme pr occurring (i.e. log P(pr)) and copies this to a temporary score TEMPDELSCORE. If first phoneme sequence loop pointer i2 equals first phoneme sequence loop pointer i, then the system is propagating the path ending at point (i,j) to one of the points (i,j+1), (i,j+2) or (i,j+3). Therefore, there is a phoneme in the second phoneme sequence which is not in the first phoneme sequence. Consequently, in step s333, the system adds the log probability of deleting phoneme pr from the first phoneme sequence (i.e. log P(ø|pr)) to TEMPDELSCORE. The processing then proceeds to step s335, where the system adds the log probability of decoding phoneme pr as second sequence phoneme d2 j2 (i.e. log P(d2 j2|pr)) to TEMPDELSCORE. The processing then proceeds to step s337 where a "log addition" of TEMPDELSCORE and DELSCORE is performed and the result is stored in DELSCORE.

- In this embodiment, since the calculation of decoding probabilities (in accordance with equation (1) above) involves summations and multiplications of probabilities, and since we are using log probabilities, this "log addition" operation effectively converts TEMPDELSCORE and DELSCORE from log probabilities back to probabilities, adds them and then reconverts them back to log probabilities. This "log addition" is a well known technique in the art of speech processing and is described in, for example, the book entitled "Automatic Speech Recognition. The development of the (Sphinx) system" by Lee, Kai-Fu published by Kluwer Academic Publishers, 1989, at pages 28 and 29. After step s337, the processing proceeds to step s339 where the phoneme loop pointer r is incremented by one and then the processing returns to step s329 where a similar processing is performed for the next phoneme known to the system. Once this calculation has been performed for each of the 43 phonemes known to the system, the processing ends.

- If at step s325, the system determines that i2 is not equal to i, then the processing proceeds to step s341 where the system determines if the second phoneme sequence loop pointer j2 equals second phoneme sequence loop pointer j. If it does, then the processing proceeds to step s343 where the phoneme loop pointer r is initialised to one. The processing then proceeds to step s345 where the phoneme loop pointer r is compared with the total number of phonemes known to the system (Nphonemes). Initially r is set to one in step s343, and therefore, the processing proceeds to step s347 where the log probability of phoneme pr occurring is determined and copied into the temporary store TEMPDELSCORE. The processing then proceeds to step s349 where the system determines the log probability of decoding phoneme pr as first sequence phoneme d1 i2 and adds this to TEMPDELSCORE. If the second phoneme sequence loop pointer j2 equals loop pointer j, then the system is propagating the path ending at point (i,j) to one of the points (i+1,j), (i+2,j) or (i+3,j). Therefore, there is a phoneme in the first phoneme sequence which is not in the second phoneme sequence. Consequently, in step s351, the system determines the log probability of deleting phoneme pr from the second phoneme sequence and adds this to TEMPDELSCORE. The processing then proceeds to step s353 where the system performs the log addition of TEMPDELSCORE with DELSCORE and stores the result in DELSCORE. The phoneme loop pointer r is then incremented by one in step s355 and the processing returns to step s345. Once the processing steps s347 to s353 have been performed for all the phonemes known to the system, the processing ends.
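
The "log addition" performed in steps s337 and s353 computes log(exp(a) + exp(b)) for two log probabilities a and b. The sketch below uses a commonly used numerically stable formulation; it is not necessarily the exact form given in the cited book, and the function name `log_add` is an assumption.

```python
import math


def log_add(log_a, log_b):
    """Return log(exp(log_a) + exp(log_b)) without leaving the log domain.

    Factoring out the larger term keeps the exponential in [0, 1], which
    avoids underflow when both log probabilities are very negative.
    """
    if log_a == -math.inf:          # log of zero probability
        return log_b
    if log_b == -math.inf:
        return log_a
    hi, lo = max(log_a, log_b), min(log_a, log_b)
    return hi + math.log1p(math.exp(lo - hi))
```

For instance, log-adding log 0.2 and log 0.3 gives log 0.5, the log of the sum of the two probabilities.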

- If at step s341, the system determines that second phoneme sequence loop pointer j2 is not equal to loop pointer j, then the processing proceeds to step s357 where the phoneme loop pointer r is initialised to one. The processing then proceeds to step s359 where the system compares the phoneme counter r with the number of phonemes known to the system (Nphonemes). Initially r is set to one in step s357, and therefore, the processing proceeds to step s361 where the system determines the log probability of phoneme pr occurring and copies this to the temporary score TEMPDELSCORE. If the loop pointer j2 is not equal to loop pointer j, then the system is propagating the path ending at point (i,j) to one of the points (i+1,j+1), (i+1,j+2) and (i+2,j+1). Therefore, there are no deletions, only insertions and decodings. The processing therefore proceeds to step s363 where the log probability of decoding phoneme pr as first sequence phoneme d1 i2 is added to TEMPDELSCORE. The processing then proceeds to step s365 where the log probability of decoding phoneme pr as second sequence phoneme d2 j2 is determined and added to TEMPDELSCORE. The system then performs, in step s367, the log addition of TEMPDELSCORE with DELSCORE and stores the result in DELSCORE. The phoneme counter r is then incremented by one in step s369 and the processing returns to step s359. Once processing steps s361 to s367 have been performed for all the phonemes known to the system, the processing ends.
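
The three branches of Figure 12 (steps s325 to s369) differ only in which two log probabilities are added to log P(pr) before the log addition. A compact Python sketch follows. The table names `log_prior`, `log_decode` and `log_delete`, the function names, and the initialisation of DELSCORE to minus infinity (log of zero) are assumptions made for illustration; for simplicity a single deletion table is used for both sequences.

```python
import math


def log_add(log_a, log_b):
    """log(exp(log_a) + exp(log_b)), treating -inf as log of zero."""
    if log_a == -math.inf:
        return log_b
    if log_b == -math.inf:
        return log_a
    hi, lo = max(log_a, log_b), min(log_a, log_b)
    return hi + math.log1p(math.exp(lo - hi))


def deletion_decoding_score(i, j, i2, j2, d1, d2, log_prior, log_decode, log_delete):
    """Accumulate DELSCORE over every phoneme p known to the system.

    log_prior[p]     = log P(p)
    log_decode[p][d] = log P(d|p)
    log_delete[p]    = log P(deletion|p)
    """
    delscore = -math.inf                       # assumed initial value
    for p in log_prior:                        # loop pointer r over all phonemes
        temp = log_prior[p]                    # s331 / s347 / s361
        if i2 == i:                            # first sequence deletion (s333, s335)
            temp += log_delete[p] + log_decode[p][d2[j2]]
        elif j2 == j:                          # second sequence deletion (s349, s351)
            temp += log_decode[p][d1[i2]] + log_delete[p]
        else:                                  # two decodings (s363, s365)
            temp += log_decode[p][d1[i2]] + log_decode[p][d2[j2]]
        delscore = log_add(delscore, temp)     # s337 / s353 / s367
    return delscore
```

In the probability domain, each branch sums over p the product of P(p) with the two relevant deletion or decoding probabilities.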

- As mentioned above, after the dynamic programming paths have been propagated to the null end node øe, the path having the best cumulative score is identified and the dynamic programming alignment unit 43 backtracks through the back pointers which were stored in step s233 for the best path, in order to identify the best alignment between the two input sequences of phonemes. In this embodiment, the phoneme sequence determination unit 45 then determines, for each aligned pair of phonemes (d1 m, d2 n) of the best alignment, the unknown phoneme, p, which maximises P(d1 m|p) P(d2 n|p) P(p). By doing so for each aligned pair, the determination unit 45 identifies the sequence of canonical phonemes that best represents the two input phoneme sequences. In this embodiment, this canonical sequence is then output by the determination unit 45 and stored in the word to phoneme dictionary 23 together with the text of the new word typed in by the user.

- A description has been given above of the way in which the dynamic programming alignment unit 43 aligns two sequences of phonemes and the way in which the phoneme sequence determination unit 45 obtains the sequence of phonemes which best represents the two input sequences given this best alignment. As those skilled in the art will appreciate, when training a new word, the user may input more than two renditions. Therefore, the dynamic programming alignment unit 43 should preferably be able to align any number of input phoneme sequences and the determination unit 45 should be able to derive the phoneme sequence which best represents any number of input phoneme sequences given the best alignment between them. A description will now be given of the way in which the dynamic programming alignment unit 43 aligns three input phoneme sequences together and how the determination unit 45 determines the phoneme sequence which best represents the three input phoneme sequences.

- Figure 13 shows a three-dimensional coordinate plot with one dimension being provided for each of the three phoneme sequences and illustrates the three-dimensional lattice of points which are processed by the dynamic programming alignment unit 43 in this case. The alignment unit 43 uses the same transition scores and phoneme probabilities and similar dynamic programming constraints in order to propagate and score each of the paths through the three-dimensional network of lattice points in the plot shown in Figure 13.

- A detailed description will now be given with reference to Figures 14 to 17 of the three-dimensional dynamic programming alignment carried out by the alignment unit 43 in this case. As those skilled in the art will appreciate from a comparison of Figures 14 to 17 with Figures 9 to 12, the three-dimensional dynamic programming process that is performed is essentially the same as the two-dimensional dynamic programming process performed when there were only two input phoneme sequences, except with the addition of a few further control loops in order to take into account the extra phoneme sequence.

- As in the first case, the scores associated with all the nodes are initialised and then the dynamic